Tesla is recalling more than two million vehicles it sold in the U.S. and 193,000 sold in Canada to fix a defective system that is supposed to ensure drivers are paying attention when they use Autopilot.

Documents posted Wednesday by U.S. safety regulators say the company will send out a software update to fix the problems, and notification letters are “expected to be mailed” to vehicle owners on Feb. 10, 2024.

The recall comes after a two-year investigation by the National Highway Traffic Safety Administration (NHTSA) into a series of crashes that happened while the Autopilot partially automated driving system was in use. Some were deadly.

The agency said its investigation found Autopilot’s method of ensuring that drivers are paying attention can be inadequate and lead to foreseeable misuse of the system.

A spokesperson for Transport Canada confirmed to CBC News that the company is conducting the same recall in Canada and that more information will be made available on its motor-vehicle safety recalls database on Wednesday afternoon.

“The recall, an over-the-air software update to enhance advanced driver assistance features, will be deployed to approximately 193,000 vehicles in Canada,” the spokesperson said in a statement.

Autopilot includes features called Autosteer and Traffic Aware Cruise Control. Autosteer is intended for use on limited-access freeways when it’s not operating with a more sophisticated feature called Autosteer on City Streets.

Tesla says it will notify owners by mail and supply the software update to enhance the controls and the visual and audible alerts of the Autosteer advanced driver-assistance feature. The software update will come at no cost to customers and is expected to roll out this month, according to the company.

CBC News has reached out to Tesla for comment.

The U.S. recall covers models Y, S, 3 and X produced between Oct. 5, 2012, and Dec. 7 of this year.

The software update includes additional controls and alerts “to further encourage the driver to adhere to their continuous driving responsibility,” the documents said.

The update was to be sent to certain affected vehicles on Tuesday, with the rest getting it at a later date, the documents said.

Software update will limit autopilot steering feature

The software update apparently will limit where Autosteer can be used.

“If the driver attempts to engage Autosteer when conditions are not met for engagement, the feature will alert the driver it is unavailable through visual and audible alerts, and Autosteer will not engage,” the recall documents said.

Depending on a Tesla’s hardware, the added controls include “increasing prominence” of visual alerts, simplifying how Autosteer is turned on and off, additional checks on whether Autosteer is being used outside of controlled access roads and when approaching traffic control devices, “and eventual suspension from Autosteer use if the driver repeatedly fails to demonstrate continuous and sustained driving responsibility,” the documents say.

Recall documents say that agency investigators met with Tesla starting in October to explain “tentative conclusions” about fixing the monitoring system. Tesla, the documents said, did not concur with the agency’s analysis but agreed to the recall on Dec. 5 in an effort to resolve the investigation.

Auto safety advocates have for years been calling for stronger regulation of the driver monitoring system, which mainly detects whether a driver’s hands are on the steering wheel. They have called for cameras, which other automakers with similar systems use, to make sure a driver is paying attention.

Independent tests show system is easy to fool

Autopilot can steer, accelerate and brake automatically in its lane, but it is a driver-assist system and cannot drive itself, despite its name. Independent tests have found that the monitoring system is easy to fool, so much so that drivers have been caught driving drunk or even sitting in the back seat.

In its defect report filed with the safety agency, Tesla said Autopilot’s controls “may not be sufficient to prevent driver misuse.”

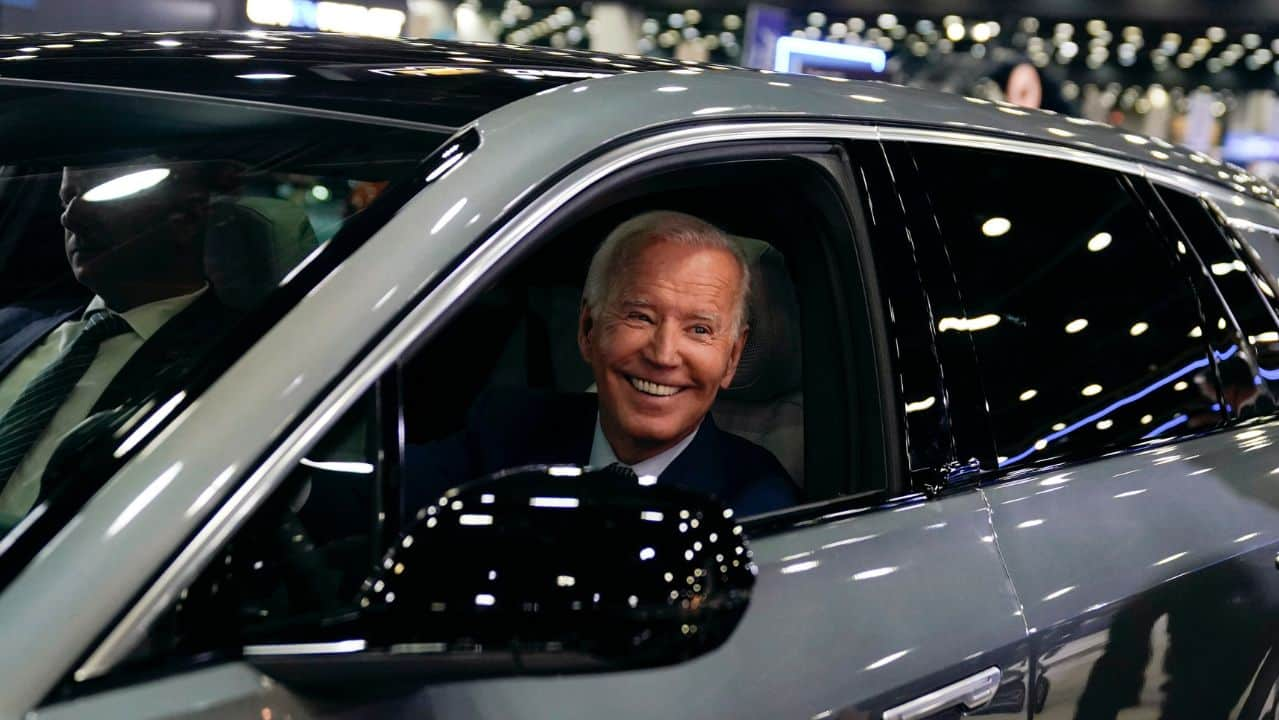

Traditional automakers and startups alike are accelerating their electric vehicle plans as demand soars due to high gas prices, and U.S. President Joe Biden has announced a huge investment in building electric vehicle chargers.

Tesla says on its website that Autopilot and a more sophisticated Full Self Driving system cannot drive autonomously and are meant to help drivers who have to be ready to intervene at all times. Full Self Driving is being tested by Tesla owners on public roads.

In a statement posted Monday on X, formerly known as Twitter, Tesla said safety is stronger when Autopilot is engaged.

The Associated Press has requested further comment from the Austin, Texas, company.

NHTSA has dispatched investigators to 35 Tesla crashes since 2016 in which the agency suspects the vehicles were running on an automated system. At least 17 people have been killed.

The investigations are part of a larger probe by the NHTSA into multiple instances of Teslas using Autopilot crashing into parked emergency vehicles that are tending to other crashes.

NHTSA has become more aggressive in pursuing safety problems with Teslas in the past year, announcing multiple recalls and investigations.
